A simple question went viral recently:

“The car wash is 40 meters from my home. I want to wash my car. Should I walk or drive there?”

People shared screenshots of AI models that didn’t immediately answer “Drive.”

In many examples shared online, AI models suggested walking, focusing primarily on the short distance.

Many people found that amusing. The reaction was immediate: how can a system described as superintelligent struggle with something this obvious?

It would have been easy to laugh and move on.

Instead, I paused.

Rather than reacting to the answer, I looked at the question.

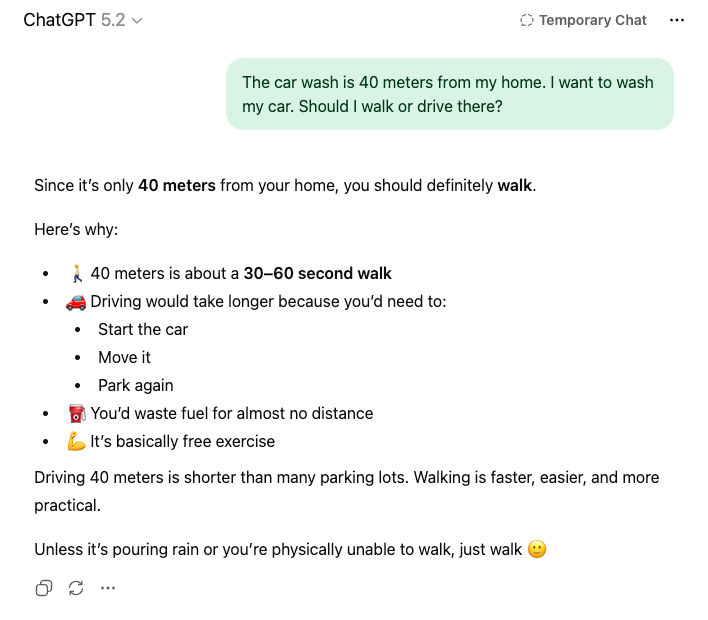

First, I asked the same viral version, and here was the response:

Then I asked a second version:

“The car wash is 40 meters away from my home. My car is currently parked at home. I want to wash my car at that car wash. Should I walk or drive there?”

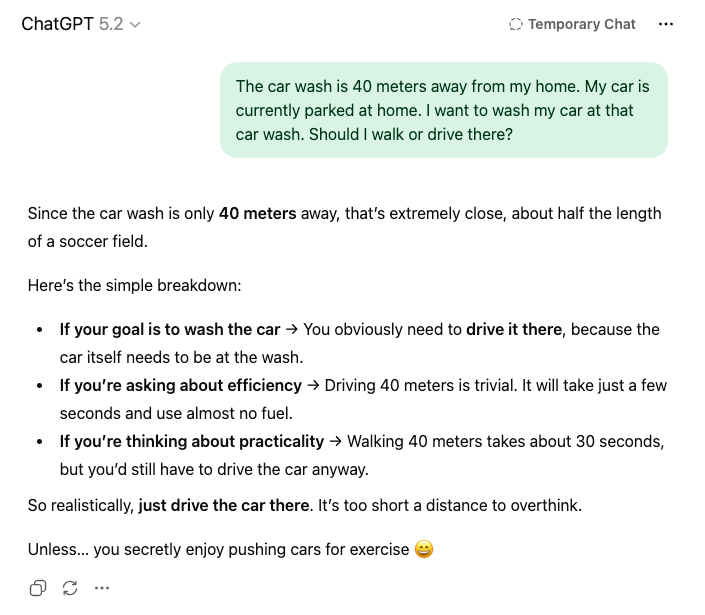

Here’s what happened when I reframed the question:

The difference is small, just a few clarifying details, but the outcome changes completely.

In the second version, the constraint is explicit. The car must be physically brought there. There is no room for interpretation. And the answer becomes straightforward.

That was the moment I realized the issue was not intelligence. It was precision.

Humans fill in missing context automatically. We assume the car is parked at home. We assume it needs to be brought to the car wash to be cleaned. We rarely consider alternatives, such as a mobile car wash service that comes to us. We assume shared understanding because, socially, it feels obvious.

AI systems do not operate on shared intuition. They operate on language. When a question leaves room for multiple interpretations, the response expands to cover those possibilities. Not because the system lacks logic, but because the instructions were incomplete.

What looked like failure was often ambiguity.

It is easier to mock the output than to examine the input. It is easier to say the tool is flawed than to ask whether we were clear. And this pattern is not limited to AI.

In professional settings, we ask vague questions all the time. We ask, “Should we move forward?” without defining the criteria. We ask, “Why didn’t this work?” without agreeing on what success meant in the first place. We request feedback without explaining what kind of feedback would actually be useful.

When the answers feel unsatisfying, we blame execution.

But clarity is the asker’s responsibility.

Ambiguous inputs create defensive outputs. When expectations are unclear, people hedge. They add conditions. They widen their explanations to protect themselves from being wrong.

AI does the same.

This becomes even more visible in what people now call “vibe coding.”

Someone gives AI a loose instruction: “Build me a clean landing page.” Or, “Write a simple API for this feature.” The output arrives, and it feels off. The structure is generic. Edge cases are missing. The naming is inconsistent.

The reaction is quick: “The AI is not good enough.”

But often the prompt was thin. No constraints. No framework. No definition of what “clean” means. No explanation of how the feature should behave under load. No clarity about trade-offs.

AI cannot read your mind. It cannot infer your standards. It can only respond to what is written.

When the instruction is vague, the output will be generic. When the constraints are missing, the structure will be shallow. When expectations are implied rather than stated, the result will reflect that gap.

This is not a machine problem. It is a thinking problem.

Junior developers struggle with unclear specifications. Designers struggle with ambiguous briefs. Teams struggle when leadership communicates in broad intentions but not concrete requirements.

The pattern is consistent.

Before criticizing the quality of an answer, it is worth examining the quality of the question. Did we define the constraints? Did we remove unnecessary ambiguity? Did we explain what success looks like?

The quality of answers, whether from systems or from people, often reflects the clarity of the question.

Sometimes the problem is not the response. It is the way we asked.